Privacy by Design: Edge AI for Protecting User Data in Smart Home Devices

The Privacy Paradox of Smart Home Monitoring

Smart home devices promise incredible insights: tracking health patterns, detecting anomalies, providing peace of mind. But there's an uncomfortable truth most companies don't talk about: the more sensors you pack into a device, the more invasive it becomes.

A camera watching your pet is also watching your home. A microphone listening for distress sounds is also hearing your conversations. The data necessary for intelligent monitoring is the same data that represents your most intimate privacy.

At Hoomanely, we build a smart pet feeding bowl called EverBowl, a device packed with cameras, thermal sensors, microphones, and weight sensors to monitor pet health. We faced a fundamental question: how do you build a device that needs to see and hear everything, while guaranteeing it captures nothing it shouldn't?

This is the story of how we implemented privacy by design through edge AI: processing data locally, filtering aggressively, and ensuring that what leaves your home is only what's necessary for your pet's health. No compromises.

The Problem: Sensors Don't Know What Matters

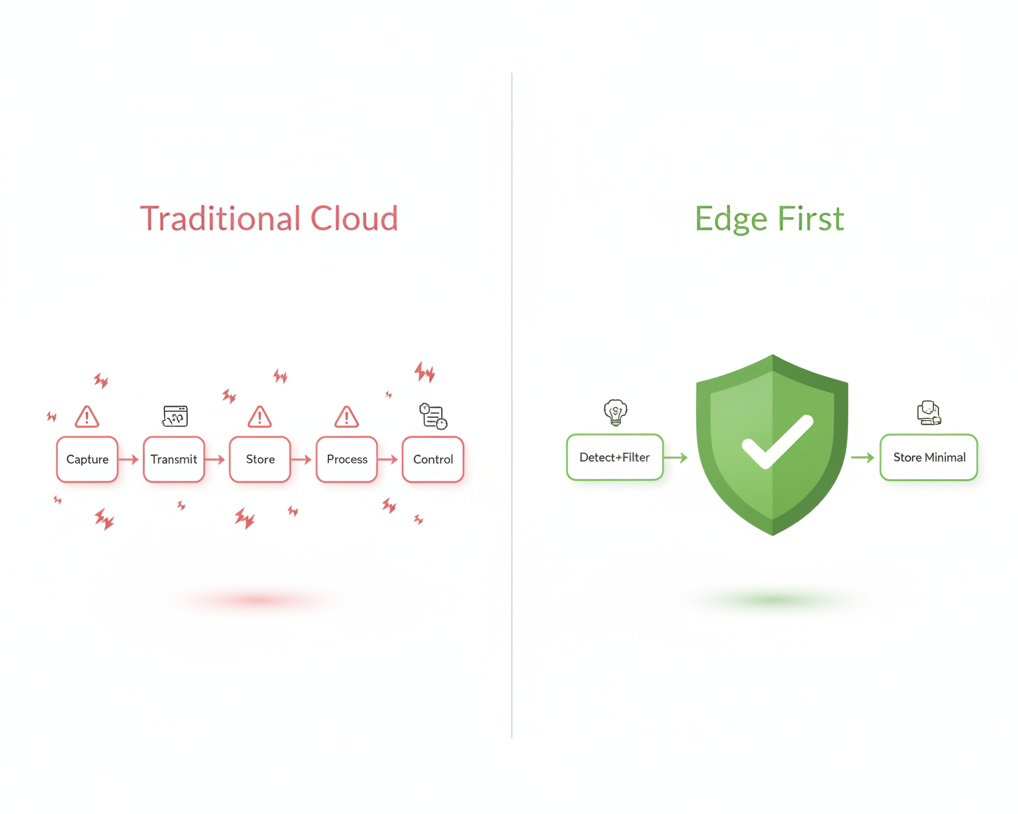

Traditional IoT devices operate on a simple principle: capture everything, send it to the cloud, process it there. It's architecturally simple and computationally easy. It's also a privacy nightmare.

Consider what our sensors could theoretically capture:

Camera: Your entire home layout, family members, guests, daily routines, security vulnerabilities.

Microphone: Private conversations, phone calls, voices of everyone in your household, sounds that reveal when you're home or away.

Thermal sensors: Heat signatures of people and pets, movement patterns, occupancy data.

None of this information is necessary for pet health monitoring. But without intelligent filtering at the edge, all of it gets captured and transmitted simply because the sensors don't know the difference between "dog eating" and "human walking by."

The standard industry approach (capture everything, filter in the cloud) means sensitive data traverses your network, hits external servers, and sits in logs and databases you don't control. Even with encryption and access controls, the data exists. That's the risk.

We needed a fundamentally different architecture.

The Architecture: Intelligence at the Edge

Our solution centers on a simple principle: the device should only remember what matters, and it should decide what matters locally.

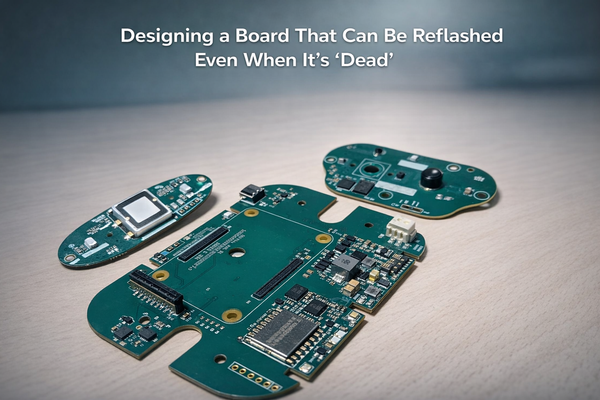

We built EverBowl around the STM32 N6, a high-performance microcontroller with an integrated AI accelerator. This gives us the computational headroom to run sophisticated ML models directly on the device, with inference times fast enough to make real-time decisions about what to capture and what to ignore.

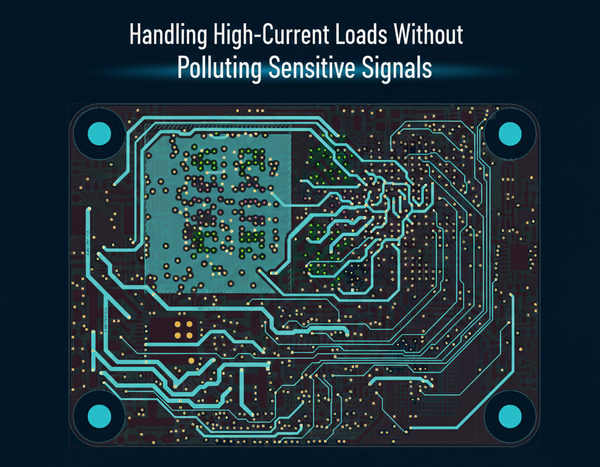

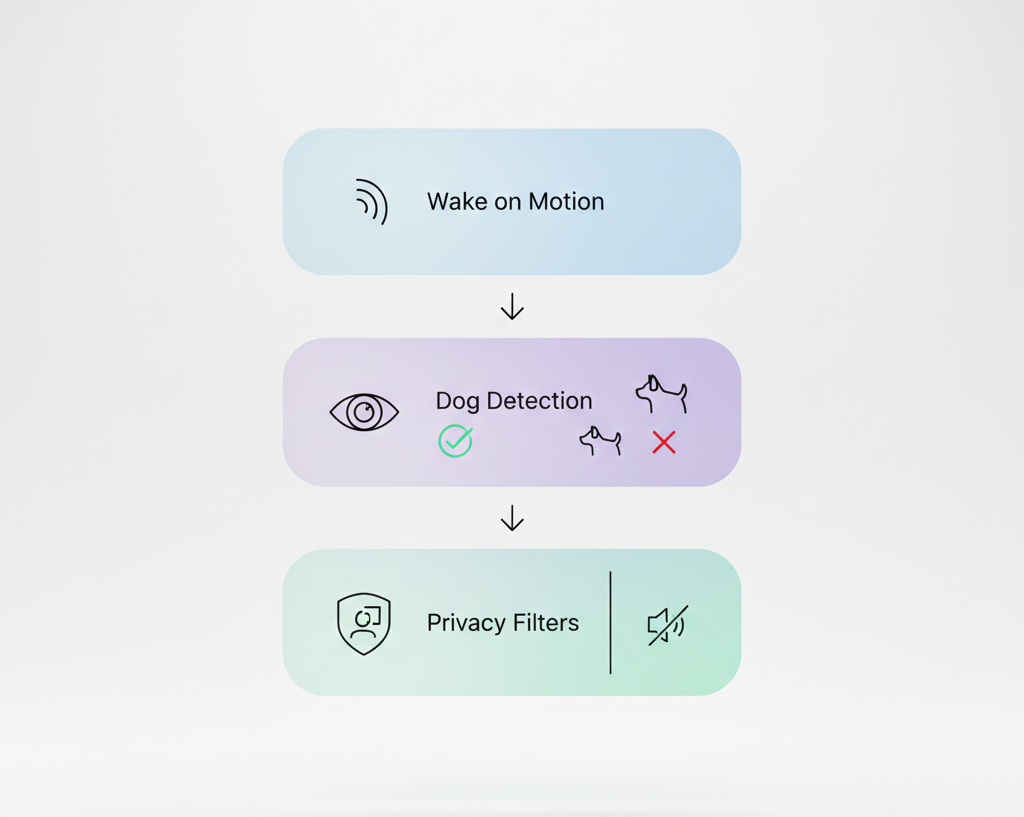

The architecture consists of three defensive layers, each running on-device:

Layer 1: Proximity-Triggered Activation

The device doesn't continuously record. Instead, it uses low-power proximity detection to wake up only when something approaches the feeding area.

When proximity is detected, the system activates the camera and immediately runs a lightweight computer vision model: dog detection.

This model answers one question: Is there a dog in frame?

If yes, proceed to capture. If no, discard the frame and return to sleep. No images of humans walking by. No recordings of family members in the kitchen. The device ignores everything except its intended subject.

This first layer alone eliminates the vast majority of unnecessary data capture. In our testing, proximity triggers fire dozens of times per day, but only a small fraction result in actual dog detection and data storage.
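The gate described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the production firmware: `capture`, `detect_dog`, `GateStats`, and the 0.8 confidence threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

Frame = List[List[int]]  # hypothetical grayscale frame: rows of pixel values


@dataclass
class GateStats:
    triggers: int = 0  # proximity wake-ups seen so far
    stored: int = 0    # frames that passed dog detection


def handle_proximity_event(
    capture: Callable[[], Frame],
    detect_dog: Callable[[Frame], float],
    stats: GateStats,
    threshold: float = 0.8,  # assumed confidence cut-off, not from the article
) -> Optional[Frame]:
    """Return the captured frame only if a dog is detected; otherwise drop it."""
    stats.triggers += 1
    frame = capture()
    if detect_dog(frame) >= threshold:
        stats.stored += 1
        return frame  # handed on to the later privacy layers
    return None  # nothing is retained; the device goes back to sleep
```

Keeping the counters next to the gate makes it straightforward to measure what fraction of wake-ups ever produce stored data.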

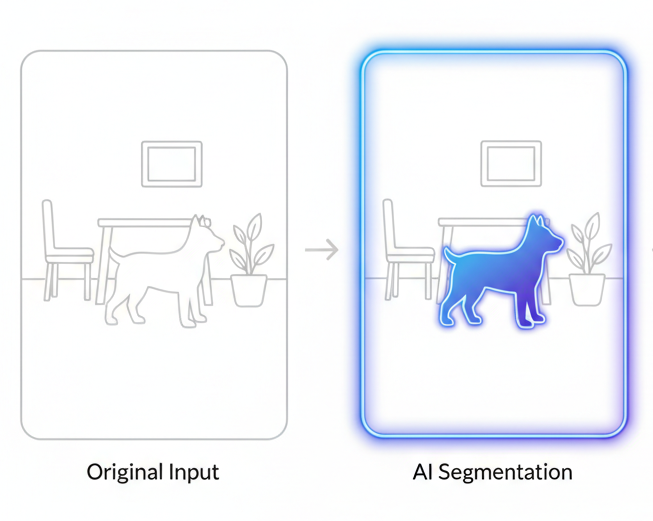

Layer 2: Segmentation and Masking

Even when a dog is present, the frame may contain sensitive background information: your home layout, objects, other people in the background.

Before storing any image, we run a real-time segmentation model on the device. This model identifies the precise pixel boundaries of the dog and masks everything else in the frame.

The stored image shows only the pet, with the background replaced by a neutral mask. As far as stored data is concerned, the device cannot see your home; it keeps only what's relevant to pet health monitoring.

This segmentation happens entirely on the N6's AI accelerator. We've optimized the model architecture to run inference in milliseconds, introducing negligible latency between capture and storage. From the user's perspective, the system is instantaneous. From a privacy perspective, the background never existed.
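The masking step itself is simple once the segmentation model has produced a per-pixel mask. A minimal sketch, assuming a grayscale image stored as nested lists and a boolean mask of the same shape; `mask_background` and the fill value of 128 are hypothetical and chosen only for illustration:

```python
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255
Mask = List[List[bool]]  # True where the segmentation model marked "dog"

NEUTRAL = 128  # assumed fill value for masked-out pixels


def mask_background(image: Image, dog_mask: Mask, fill: int = NEUTRAL) -> Image:
    """Keep pixels inside the dog mask; overwrite everything else with fill."""
    same_shape = len(image) == len(dog_mask) and all(
        len(row) == len(mask_row) for row, mask_row in zip(image, dog_mask)
    )
    if not same_shape:
        raise ValueError("image and mask must have the same shape")
    return [
        [px if keep else fill for px, keep in zip(row, mask_row)]
        for row, mask_row in zip(image, dog_mask)
    ]
```

Because the function returns a new image and only that result is written to storage, the unmasked pixels never need to outlive the capture buffer.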

Layer 3: Audio Filtering for Human Voice Removal

Audio presents a unique challenge. Pet health monitoring requires capturing sounds such as coughing, wheezing, and unusual vocalizations. But microphones are indiscriminate. They capture human speech with the same fidelity.

Our third layer runs an audio classification model on-device that identifies and filters human voice frequencies before storage.

The model is trained to recognize spectral patterns characteristic of human speech: formants, pitch ranges, phonetic structures. When these patterns are detected, those frequency bands are attenuated or removed entirely from the stored audio.

What remains are the sounds we actually need: pet vocalizations, eating sounds, environmental audio relevant to health monitoring. What's filtered out: your conversations, phone calls, any identifiable human speech.

This processing happens in real-time as audio is captured. The raw, unfiltered audio stream never touches storage. By the time data is written to flash, it's already been privacy-filtered.
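To make the idea of attenuating speech bands concrete, here is a deliberately simplified per-frame sketch. It assumes a hypothetical `is_speech` classifier callback and a fixed 300-3400 Hz band (the traditional telephony voice band) rather than the learned, pattern-specific filtering described above, and it uses a naive DFT that is only practical for short frames. A fixed band would also remove any pet sounds that overlap it, so treat this as an illustration of the mechanism, not of the deployed model.

```python
import cmath
import math
from typing import Callable, List, Sequence, Tuple


def dft(x: Sequence[float]) -> List[complex]:
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [
        sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def idft(spectrum: Sequence[complex]) -> List[float]:
    """Inverse of dft(); returns the real part of each sample."""
    n = len(spectrum)
    return [
        (sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n).real
        for t in range(n)
    ]


def filter_frame(
    frame: Sequence[float],
    sample_rate: float,
    is_speech: Callable[[Sequence[float]], bool],  # hypothetical classifier
    band: Tuple[float, float] = (300.0, 3400.0),   # assumed band, in Hz
    gain: float = 0.0,                             # 0.0 removes, 0 < gain < 1 attenuates
) -> List[float]:
    """Attenuate the given band in frames the classifier flags as speech."""
    if not is_speech(frame):
        return list(frame)
    n = len(frame)
    spectrum = dft(frame)
    for k in range(n):
        # Bin k and its mirror n - k both carry frequency min(k, n - k) * fs / n.
        freq = min(k, n - k) * sample_rate / n
        if band[0] <= freq <= band[1]:
            spectrum[k] *= gain
    return idft(spectrum)
```

Only the return value of `filter_frame` would be passed to storage, which mirrors the point that the raw stream is never written.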

Why Edge Processing Changes Everything

The critical insight is that data privacy isn't primarily about access controls; it's about data minimization.

Traditional cloud-first architectures rely on protecting data after it's been captured. Encryption, authentication, and strict access policies are all important, but all reactive. The data exists somewhere, and where data exists, it can be breached, leaked, subpoenaed, or misused.

Edge processing inverts this model. We prevent sensitive data from ever existing in the first place.

Consider the attack surface:

Cloud-first approach: Capture → Transmit → Store → Process → Apply access controls. Every step is a potential vulnerability. Network interception, server breach, database leak, insider access, third-party processors.

Edge-first approach: Detect → Filter → Process → Store only what's relevant. Sensitive data never enters the pipeline. There's nothing to intercept, nothing to breach, nothing to leak.

This security doesn't depend on a policy holding up; it follows from the architecture. You can't steal data that doesn't exist.

The Technical Challenges We Solved

Building this system wasn't straightforward. Edge AI for privacy introduces constraints that don't exist in cloud architectures.

Model Optimization for Real-Time Inference

Running segmentation and audio filtering models on embedded hardware requires aggressive optimization. Our N6 accelerator is powerful, but it's not a cloud GPU.

We quantized models from 32-bit floating-point to 8-bit integer representations, reducing model size and inference time without meaningful accuracy loss. We pruned unnecessary layers and fine-tuned architectures specifically for the inference patterns we needed.
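For readers unfamiliar with the float-to-int8 step, the sketch below shows generic affine (scale and zero-point) quantization of a list of weights. It illustrates the general technique only; the function names are hypothetical, and it is not the toolchain or the exact scheme used for the models above.

```python
from typing import List, Tuple

QMIN, QMAX = -128, 127  # signed 8-bit range


def quantize_int8(weights: List[float]) -> Tuple[List[int], float, int]:
    """Map floats to int8 so that real_value ~= scale * (q - zero_point)."""
    # The representable range must include 0.0 so that zero maps exactly.
    lo = min(weights + [0.0])
    hi = max(weights + [0.0])
    scale = (hi - lo) / (QMAX - QMIN) or 1.0  # fall back to 1.0 if all zeros
    zero_point = round(QMIN - lo / scale)
    q = [max(QMIN, min(QMAX, round(w / scale) + zero_point)) for w in weights]
    return q, scale, zero_point


def dequantize(q: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate float values from int8 codes."""
    return [(v - zero_point) * scale for v in q]
```

Each weight now takes one byte instead of four, and the round-trip error per weight is bounded by roughly one quantization step (`scale`), which is why accuracy loss can stay small when the weight range is narrow.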

The result: dog detection runs in under 50 ms, segmentation completes in under 100 ms, and audio filtering runs in real time with imperceptible latency. These numbers were critical: any perceptible lag would degrade the user experience and make the system feel "slow" despite its privacy benefits.

Power Management for Always-Ready Systems

Edge processing is computationally expensive. Running ML models continuously would drain power and generate heat in a device that sits on someone's kitchen floor.

Our solution was intelligent duty cycling. The system stays in deep sleep most of the time, waking only on proximity events. When awake, it processes aggressively to make decisions quickly, then returns to sleep.

This gave us the best of both worlds: instant responsiveness when needed, minimal power consumption when idle. Users never wait for the device to "wake up," yet backup battery life remains practical for a device that is normally plugged in.
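The benefit of duty cycling can be estimated with simple arithmetic: average power is the time-weighted mix of active and sleep power. The sketch below assumes hypothetical function and parameter names, and any figures passed to it are illustrative rather than measurements from the device.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_power_mw(
    events_per_day: float,
    awake_s_per_event: float,
    active_mw: float,
    sleep_mw: float,
) -> float:
    """Time-weighted average power for a wake-on-event duty cycle."""
    awake_s = events_per_day * awake_s_per_event
    if not 0 <= awake_s <= SECONDS_PER_DAY:
        raise ValueError("total awake time must fit within one day")
    duty = awake_s / SECONDS_PER_DAY  # fraction of the day spent awake
    return duty * active_mw + (1 - duty) * sleep_mw


def backup_hours(capacity_mwh: float, avg_mw: float) -> float:
    """Rough backup runtime, ignoring conversion losses and battery aging."""
    return capacity_mwh / avg_mw
```

With only dozens of short wake-ups per day, the duty fraction is tiny, so the average is dominated by the deep-sleep figure rather than by the cost of running inference.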

Balancing Privacy and Functionality

The hardest challenge wasn't technical; it was philosophical. Where do you draw the line between privacy and functionality?

For example: should we blur human faces if they appear in frame with a dog, or reject the entire frame? The former preserves more context for health analysis but risks storing identifiable features. The latter is more private but might miss medically relevant interactions.

We chose aggressive privacy every time. If a human is in frame, the segmentation model masks them entirely, just like the background. We'd rather miss an edge case than compromise on our privacy commitment.

These decisions don't have objectively correct answers. But having a clear principle ("when in doubt, protect privacy") made them tractable.

The Results: Privacy Without Compromise

After deploying this architecture across our user base, the metrics speak for themselves:

Data reduction: Over 90% of proximity triggers result in no data storage because no dog is detected. The system ignores the vast majority of activity in your home.

Background privacy: 100% of stored images have backgrounds masked. Not a single frame contains identifiable home layout or objects.

Audio privacy: Human voice filtering removes identifiable speech from all stored audio while preserving pet vocalizations and environmental sounds.

Performance: End-to-end latency from proximity detection to stored, privacy-filtered data averages under 200 ms. Users experience the system as instantaneous.

User trust: Post-deployment surveys show that users who understand our privacy architecture are significantly more comfortable with in-home monitoring. Privacy isn't just good ethics; it's good product design.

Key Takeaways

Privacy by design isn't a feature; it's an architecture. You can't bolt privacy onto a system that captures everything. It must be fundamental to how data flows through your device.

Edge AI enables true data minimization. Processing locally means sensitive data can be filtered before it ever enters storage or transmission. This is qualitatively different from encrypting data that already exists.

Performance and privacy aren't mutually exclusive. With thoughtful optimization, edge processing can be faster than cloud processing while being dramatically more private.

Explicit privacy decisions build user trust. When users understand that your device literally cannot see their home or hear their conversations, they trust it in ways no privacy policy can achieve.

Optimization is the price of edge intelligence. You'll spend more time on model compression, quantization, and inference optimization than you would in a cloud architecture. It's worth it.