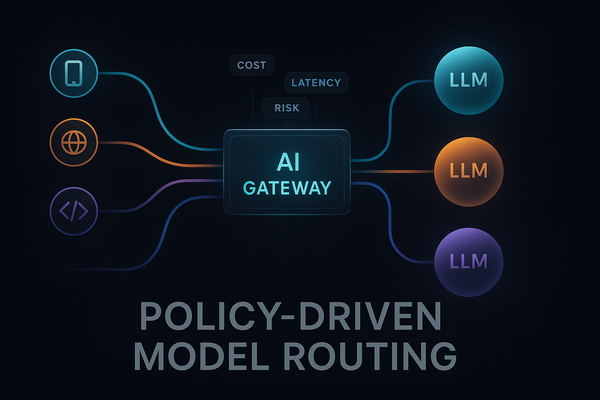

Policy-Driven Model Routing: Selecting the Right LLM Per Request

The first LLM integration is usually simple: you pick one provider, one model, one API key, and ship. The second and third are where things start to hurt. Suddenly you have a “cheap” model for bulk tasks, a “smart” model for critical flows, maybe a vision model, maybe a provider