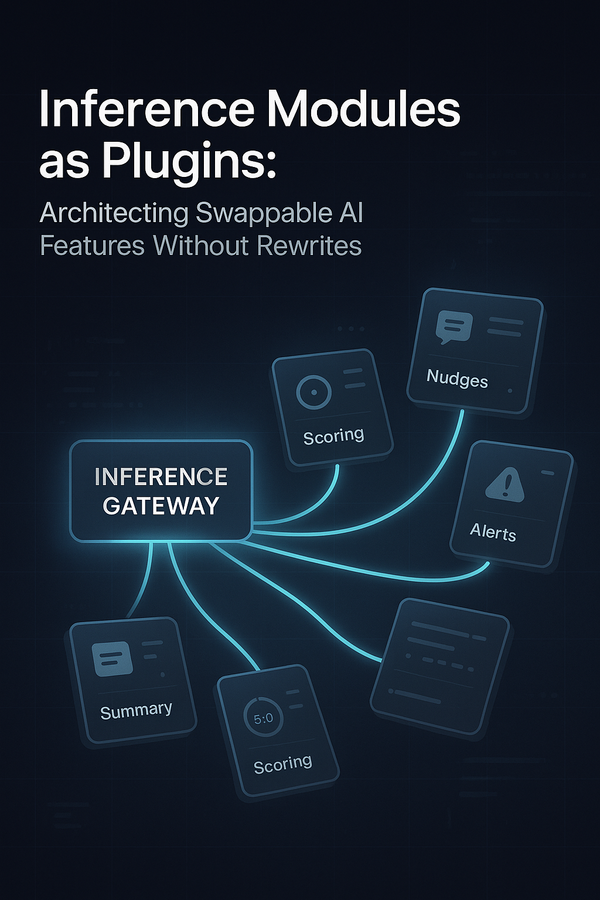

Inference Modules as Plugins: Architecting Swappable AI Features Without Rewrites

Most teams add AI the same way they add any new feature: ship a model, wire an endpoint, move on. A few months later, you’re juggling three versions of the “scoring service”, five subtly different summarizers, and a bundle of feature flags nobody fully trusts. Changing one model feels