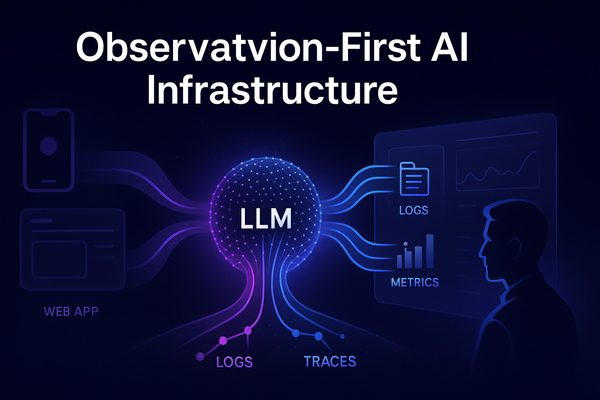

Observation-First AI Infrastructure for LLM-Powered Systems

When an AI feature behaves well, it feels effortless: the user asks a question, the model replies, and everything “just works.” But under that smooth surface, LLM calls are some of the most complex operations in your stack: huge prompts, variable latency, opaque provider behavior, and costs that can drift